Summary

This help page describes the data settings for an ETL Configuration that transfers data from PostgreSQL.

Constraints

- None in particular.

Setting items

STEP 1 Basic settings

| Item name | Required | Default value | Description |
|---|---|---|---|
| PostgreSQL Connection Configuration | Yes | - | Select a previously registered Connection Configuration that has the permissions this ETL Configuration needs. |
| Database | Yes | - | Specify the name of the database that contains the data to retrieve. |
| Schema | Yes | public | Specify the name of the schema that contains the data to retrieve. You can also select a schema from the list retrieved with Load Schema List. |
| Transfer Method | Yes | Transfer using query | Select either Transfer using query or Incremental Data Transfer. For details on incremental transfer, see Incremental Data Transfer Function. |
| Query | Yes | - | Required when Transfer using query is selected as the transfer method. Enter the SQL that retrieves the data to transfer. |
| Table | Yes | - | Required when Incremental Data Transfer is selected as the transfer method. Enter the name of the table that contains the data to transfer. |
| Default time zone | Yes | UTC | Specify the time zone used for columns of type date, timestamp, or datetime. |
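The idea behind Incremental Data Transfer can be sketched as follows. This is a minimal illustration under stated assumptions, not the product's implementation: it assumes each run remembers the largest value of a steadily increasing column (a hypothetical `id` column) and the next run fetches only rows beyond it. An in-memory SQLite database stands in for PostgreSQL, and the table `orders` and the function `fetch_incremental` are hypothetical. See Incremental Data Transfer Function for the actual behavior.

```python
import sqlite3


def fetch_incremental(conn, table, column, last_value=None):
    """Fetch rows newer than last_value and return them with the new high-water mark.

    Hypothetical helper. `table` and `column` are interpolated directly, so they
    must be trusted identifiers; only the value is passed as a bound parameter.
    """
    sql = f"SELECT * FROM {table}"
    params = ()
    if last_value is not None:
        # Later runs: only rows beyond the previously recorded position.
        sql += f" WHERE {column} > ?"
        params = (last_value,)
    sql += f" ORDER BY {column}"
    rows = conn.execute(sql, params).fetchall()
    # Keep the old mark when no new rows arrived.
    new_last = rows[-1][column] if rows else last_value
    return rows, new_last


# SQLite stands in for PostgreSQL in this sketch.
conn = sqlite3.connect(":memory:")
conn.row_factory = sqlite3.Row  # lets rows be indexed by column name
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, item TEXT)")
conn.executemany("INSERT INTO orders (id, item) VALUES (?, ?)", [(1, "a"), (2, "b")])

rows, mark = fetch_incremental(conn, "orders", "id")            # first run: ids 1 and 2
conn.execute("INSERT INTO orders (id, item) VALUES (3, 'c')")
new_rows, mark = fetch_incremental(conn, "orders", "id", mark)  # next run: id 3 only
print([r["id"] for r in rows], [r["id"] for r in new_rows], mark)  # [1, 2] [3] 3
```

Ordering by the tracked column keeps the last fetched row equal to the new high-water mark, which is why the sketch requires a column whose values only increase.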

STEP 2 Detailed settings

| Item name | Default value | Description |
|---|---|---|
| Number of records processed by the cursor at one time | 10000 | Number of rows the cursor fetches at a time. |
| Connection timeout (seconds) | 300 | Time, in seconds, to wait for a connection to be established before timing out. |
| Socket timeout (seconds) | 1800 | Time, in seconds, to wait on socket read operations before timing out. |
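The cursor setting above controls how many rows are read per batch. The sketch below illustrates the idea with Python's standard `sqlite3` module standing in for a PostgreSQL driver, which is an assumption for illustration only: `fetchmany` returns at most the given number of rows per call, so 25,000 rows arrive in batches of 10,000, 10,000, and 5,000. With a driver that streams results from the server, a larger value generally means fewer round trips but more memory per batch. `FETCH_ROWS` and `iter_batches` are hypothetical names.

```python
import sqlite3

# Hypothetical constant mirroring the default of
# "Number of records processed by the cursor at one time".
FETCH_ROWS = 10000


def iter_batches(cursor, size=FETCH_ROWS):
    """Yield lists of at most `size` rows until the cursor is exhausted."""
    while True:
        batch = cursor.fetchmany(size)
        if not batch:  # an empty list means no rows remain
            break
        yield batch


# SQLite stands in for PostgreSQL in this sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (n INTEGER)")
conn.executemany("INSERT INTO t VALUES (?)", ((i,) for i in range(25000)))
cur = conn.execute("SELECT n FROM t")
sizes = [len(b) for b in iter_batches(cur)]
print(sizes)  # [10000, 10000, 5000]
```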

Data types that must be converted to string type

Some PostgreSQL data types are not supported by Embulk. Columns of these types must therefore be converted to the string type, which Embulk supports.

If unsupported data types are included

If the transfer includes columns of unsupported data types, the following error occurs during preview and transfer:

Unsupported type interval (sqlType=1111) of 'interval_data' column.

How to convert column data types

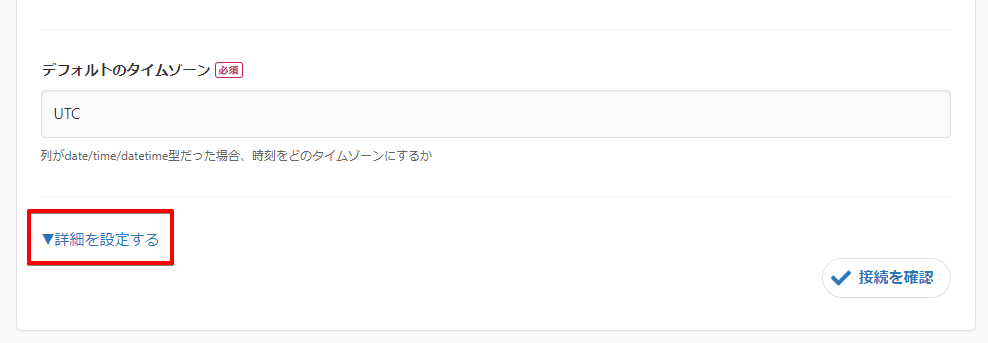

Clicking "Set Details" in STEP 1 of the ETL Configuration editing screen expands the Imported Data Type settings.

For each column whose data type appears in the list below, specify string as the imported data type.

List of data types that need to be converted to string type

The following data types must be imported as the string type:

- bit varying

- box

- bytea

- cidr

- circle

- inet

- interval

- line

- lseg

- macaddr

- macaddr8

- money

- path

- pg_lsn

- point

- polygon

- tsquery

- tsvector

- txid_snapshot

- xml
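The list above can also be applied when reviewing a table's columns before configuring a transfer. The sketch below uses hypothetical helpers that are not part of the product: given (column name, type name) pairs, with type names written as in the list, it reports which columns need the string type. It also shows an alternative that is an assumption rather than a documented feature: with Transfer using query, casting such columns to text with PostgreSQL's `::text` in the SQL itself. The table `events` and its columns are made up.

```python
# Data types from the list above that must be imported as the string type.
STRING_ONLY_TYPES = {
    "bit varying", "box", "bytea", "cidr", "circle", "inet", "interval",
    "line", "lseg", "macaddr", "macaddr8", "money", "path", "pg_lsn",
    "point", "polygon", "tsquery", "tsvector", "txid_snapshot", "xml",
}


def columns_to_convert(columns):
    """Return names of columns whose type name is in STRING_ONLY_TYPES (hypothetical helper)."""
    return [name for name, pg_type in columns if pg_type.lower() in STRING_ONLY_TYPES]


def build_select(table, columns):
    """Build a SELECT that casts string-only columns to text with ::text (hypothetical helper).

    Identifiers are not quoted, so names that need quoting in PostgreSQL
    would require extra handling.
    """
    convert = set(columns_to_convert(columns))
    parts = [f"{name}::text AS {name}" if name in convert else name for name, _ in columns]
    return f"SELECT {', '.join(parts)} FROM {table}"


cols = [("id", "integer"), ("interval_data", "interval"), ("created_at", "timestamp")]
print(columns_to_convert(cols))  # ['interval_data']
print(build_select("events", cols))
# SELECT id, interval_data::text AS interval_data, created_at FROM events
```

Casting in the query keeps the conversion next to the SQL that selects the data, while the Imported Data Type setting described above works for either transfer method.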